research

About my research:

I have a background in behavioural ecology and sensory ecology, asking questions such as how an animal’s cognition, or its appearance to other animals, affects how it interacts with its environment, and how this in turn affects its evolution (see my publications).

I’m currently a NERC Independent Research Fellow, investigating how the light environment and a habitat’s three-dimensional structure affect an animal’s camouflage (see here for more info).

Prior to my fellowship I worked as a postdoctoral research associate on a BBSRC grant to Martin Stevens and John Skelhorn, investigating how easily predators can learn and switch between different camouflage strategies. I tested this using a series of touch-screen experiments on humans and laboratory chickens, training them to find computer-generated “moths” concealed with different types of camouflage.

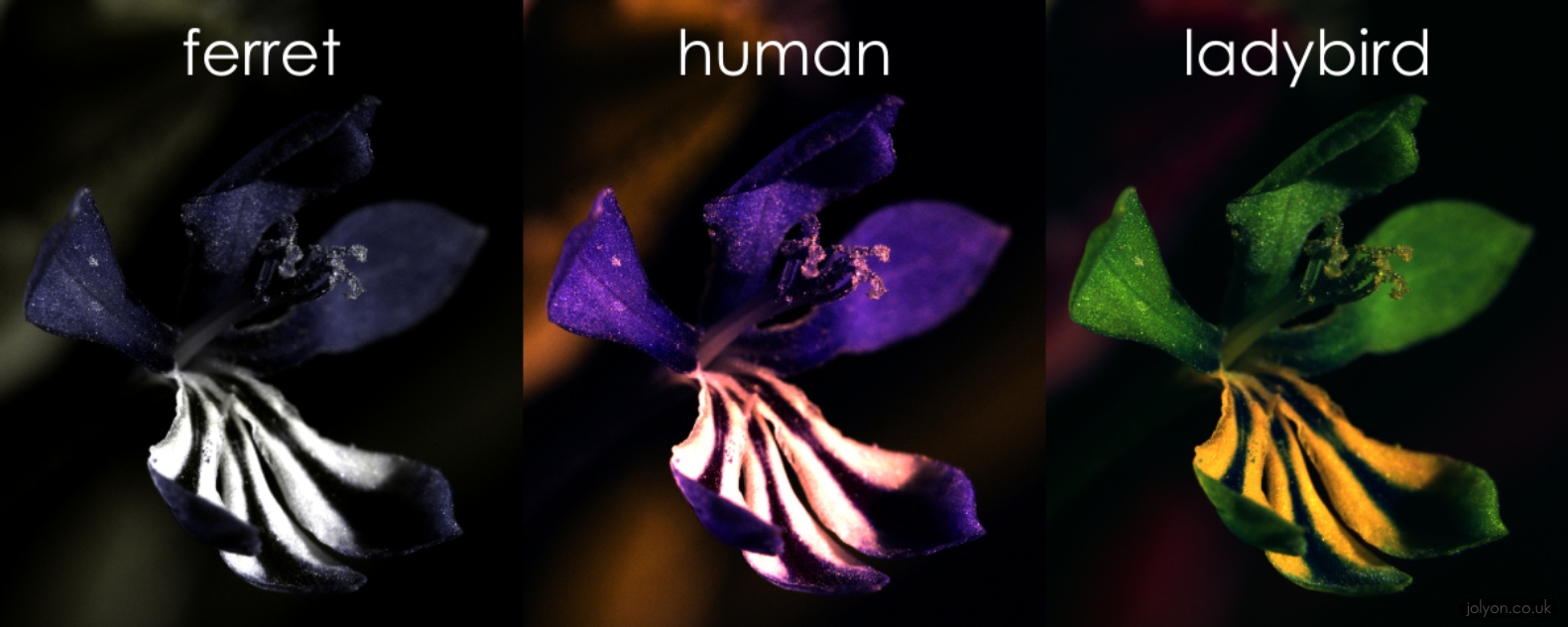

Combining my interests in photography, image analysis and animal vision has led me to develop new tools and methodologies for using standard consumer digital cameras as multispectral imaging tools for scientific purposes. This includes ultraviolet photography, and models that can convert from “camera vision” to “animal vision” (see the Image Analysis Toolbox).

My previous work included a postdoc with Martin and Claire Spottiswoode investigating how camouflage affects the survival of animals in the real world, using ground-nesting birds in Zambia as a model. We discovered that pattern and contrast matching were the most important cues that gave away the location of nests to predators, and that each female could choose where to create her nest based on the appearance of her own plumage, or the appearance of her clutch.

For my PhD I focussed on the ecological significance of tool-use in New Caledonian crows. Using methods ranging from animal-borne video cameras to isotope analysis and motion-triggered cameras, I contributed to our understanding of how important tool-use is to the ecology and survival of this secretive species. For example, the isotope analysis suggested that over a third of the crows’ daily energy requirement could depend on tool-use, while animal-borne cameras revealed previously unknown foraging strategies and food sources. My research into the New Caledonian crows’ vision also highlighted how eye and bill morphology could have evolved to enable tool-use at the expense of other ecological pressures on their morphology, such as accessing carrion.

Here’s a photo of me with human-visible colours on the left, and UV on the right. Many other species can see UV light, so we need to use special cameras, filters and computer modelling to ‘see’ the world through the eyes of another species.

Camouflage is a prey species’ first line of defence, and the scientific field has been expanding over the past few years to investigate various evolutionary and ecological questions. I am currently using a range of field and lab experiments to study camouflage, investigating how specialist versus generalist camouflage strategies help to conceal bird eggs in the wild, and how the precise location chosen to lay the eggs affects their survival. Computer-based experiments on humans are testing whether so-called “distractive” markings can draw a predator’s attention away from the tell-tale outline of a prey species.

The growth in sensory ecology has in part been driven by advances in computers and digital imaging systems. I have developed and improved the tools used to model the visual systems of animals from digital images, streamlining the software in open-source platforms and developing new methodologies for calibrating digital cameras so that their photos can be converted to animal vision. These methodologies are currently being put into practice by the Exeter University sensory ecology group and collaborators at Cambridge University and elsewhere. However, these tools will be invaluable not only to the expanding field of sensory ecology, but also in practical applications in the military, road safety, public planning and advertising industries.

I photographed this flower in visible and UV light, then ran the images through my colour vision models for different species. Ferrets can see a little further into the UV than we can, but only see in two colours. They really don’t miss much in this scene compared to us humans with three colours. But the ladybird vision shows how this flower is trying to signal to insects with deeper UV vision, hoping to attract them in order to spread its pollen.